At work, we have to handle and process many types of (sometimes archaic) financial protocols. One such protocol, Image Cash Letter (ICL, also known as ANSI DSTU X9.37 or X9.100-180), describes how cheques (or checks, for the US folks) are transmitted electronically between financial institutions.

X9.37 is an archaic binary format that is still in use today. The specification allows a file to be encoded in either 8-bit IBM EBCDIC or ASCII, and a file contains both plain-text characters and TIFF image data. In Scala, one of the best ways to parse such protocols is scodec, a wonderful combinator library for creating codecs for binary data. I recommend reading about the library and getting familiar with its syntax before reading further.

The problem

Since both encodings are 8-bit, the two variants cannot be told apart by file size or layout. Fortunately, the specification explains how to detect the encoding:

> The coding scheme may be verified by inspecting the first two characters of the X9.37 file that have the value ‘01’ (File Header Record Type 01, Field 1). The value ‘01’ is defined in EBCDIC with the hexadecimal value ‘F0F1’ and in ASCII with the hexadecimal value ‘3031’.

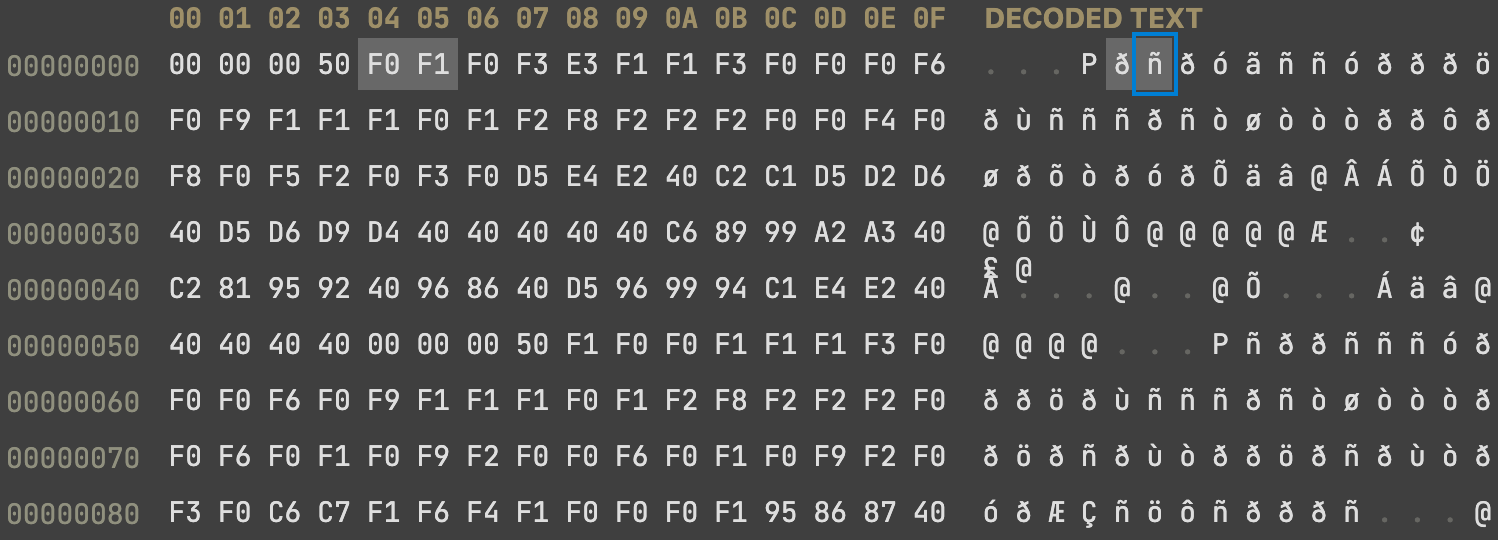

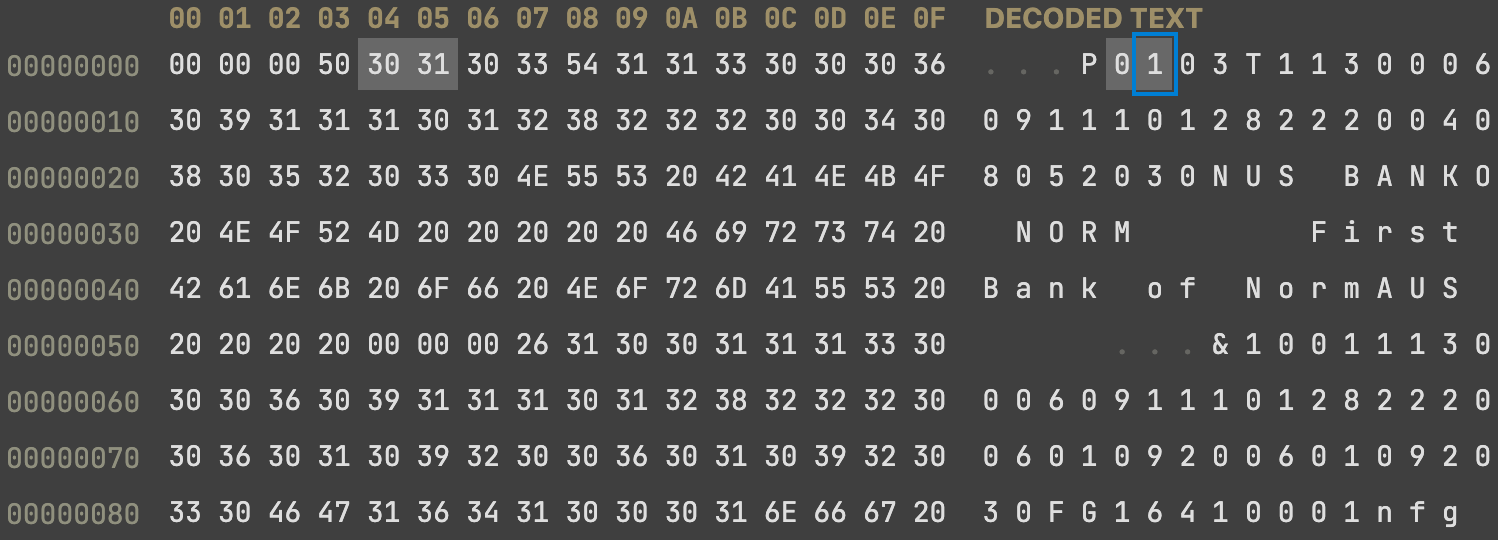

And indeed, inspecting such files in a hex editor reveals this difference:

| EBCDIC | ASCII |
|---|---|
| `F0 F1` | `30 31` |

Both values come immediately after the leading 32-bit integer that holds the File Header Record length (always 0x50), that is, at byte offsets 4 and 5.
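The check itself is small enough to sketch without any library. The following is a minimal illustration, not the scodec codec from this post: `EncodingSniffer` and `detect` are hypothetical names, and using `IBM037` as the EBCDIC code page is an assumption, since the quoted passage only says "EBCDIC".

```scala
import java.nio.charset.{Charset, StandardCharsets}

object EncodingSniffer {
  // Assumption: IBM037 stands in for "EBCDIC"; swap in the code page your files actually use.
  lazy val Ebcdic: Charset = Charset.forName("IBM037")

  /** Inspects bytes 4 and 5, i.e. the record type that follows the 4-byte length prefix. */
  def detect(file: Array[Byte]): Either[String, Charset] =
    if (file.length < 6) Left(s"need at least 6 bytes, got ${file.length}")
    else (file(4) & 0xff, file(5) & 0xff) match {
      case (0xf0, 0xf1) => Right(Ebcdic)                    // '01' in EBCDIC
      case (0x30, 0x31) => Right(StandardCharsets.US_ASCII) // '01' in ASCII
      case (a, b)       => Left(f"unexpected record type bytes 0x$a%02X 0x$b%02X")
    }
}
```

Returning an `Either` keeps the "neither pattern matched" case explicit, which is useful when a file is truncated or is not an X9.37 file at all.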

The solution

Armed with this knowledge, we can create a Codec to detect this encoding and expose it as a java.nio.charset.Charset. Fortunately, many scodec string combinators take Charset as an implicit parameter, allowing us to specify the encoding with which we wish to read the strings! The inspiration for this idea came from a similar solution of detecting byte ordering (endianness) in libpcap files.

We create the codec for Charset that tries to decode the two bytes and match them against a known pattern:

import java.nio.charset.{Charset, StandardCharsets}

The Charset decoded by this codec can now be passed implicitly to all other codecs that require an implicit Charset, allowing us to decode the strings correctly for both encoding types!
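To show why an implicit `Charset` is convenient, here is a small library-free sketch of the same pattern. `FixedWidthText`, `readField` and `recordType` are hypothetical names made up for this illustration and are not part of scodec; the point is only that the charset is decided once and every later text read picks it up from implicit scope.

```scala
import java.nio.charset.{Charset, StandardCharsets}

object FixedWidthText {
  /** Reads `length` bytes starting at `offset` as text, using the charset found in implicit scope. */
  def readField(data: Array[Byte], offset: Int, length: Int)(implicit charset: Charset): String =
    new String(data, offset, length, charset)

  /** Reads the two-character record type that follows the 4-byte length prefix. */
  def recordType(header: Array[Byte], detected: Charset): String = {
    implicit val charset: Charset = detected  // decided once, e.g. by sniffing the first record
    readField(header, offset = 4, length = 2) // no charset argument needed at the call site
  }
}
```

With the bytes `F0 F1` and an EBCDIC charset such as `IBM037` (an assumption about the code page), or the bytes `30 31` and US-ASCII, `recordType` yields the same string, `"01"`.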

Bonus: decoding a list of unknown length

In its simplest form, an X9.37 file is made up of record sections. Each section contains a Header record, followed by several data records and a Control record containing checksums and other verification information. Here’s an example in ASCII art form:

┌────────────────────────┬────────────────────────┐

Unfortunately, neither the Header nor the Control record specifies how many Cash Letter records the file contains, and all the scodec combinators I found that deal with lists expect a count of the items to decode.

After a few iterations, I came up with the following function, which tries to read a list of unknown size and, when decoding fails, returns the number of items it has read so far:

/**

This did the job (and also got a thumbs up from Michael Pilquist, the creator of scodec, on the scodec Discord)!
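The idea of decoding until the first failure can also be illustrated without scodec. The sketch below is a library-free analogue, not the function described above: `GreedyList`, `Decoder`, `many` and `lengthPrefixed` are hypothetical names, and it collects the decoded items directly instead of counting them first. The example element decoder assumes a 4-byte big-endian length prefix in front of each record.

```scala
import java.nio.ByteBuffer
import scala.annotation.tailrec

object GreedyList {
  // A decoder consumes a prefix of the input and returns the value plus the unread
  // remainder, or None when the input does not match.
  type Decoder[A] = Vector[Byte] => Option[(A, Vector[Byte])]

  /** Applies `decodeOne` repeatedly and stops, without failing, at the first element that does not decode. */
  def many[A](decodeOne: Decoder[A])(input: Vector[Byte]): (List[A], Vector[Byte]) = {
    @tailrec
    def loop(rest: Vector[Byte], acc: List[A]): (List[A], Vector[Byte]) =
      decodeOne(rest) match {
        // Require progress so a decoder that consumes nothing cannot loop forever.
        case Some((item, next)) if next.length < rest.length => loop(next, item :: acc)
        case _                                               => (acc.reverse, rest)
      }
    loop(input, Nil)
  }

  // Example element decoder (assumption: 4-byte big-endian length, then that many payload bytes).
  val lengthPrefixed: Decoder[Vector[Byte]] = { in =>
    if (in.length < 4) None
    else {
      val len  = ByteBuffer.wrap(in.take(4).toArray).getInt
      val body = in.drop(4)
      if (len < 0 || body.length < len) None
      else Some((body.take(len), body.drop(len)))
    }
  }
}
```

Returning the unread remainder alongside the items lets whatever comes next (a Control record, for instance) be decoded from the point where the list stopped.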

Finally, we can parse the X9.37 file using this top-level definition:

case class X937File(

Implementations of FileHeader, CashLetter, FileControl and others are left as an exercise to the reader 😄

Happy parsing!